Trust by Design – Showing What’s Verified vs Generated

Trust in banking doesn’t come from intelligence — it comes from clarity.

When people check their finances, they’re not just looking for information; they’re looking for reassurance that what they see is real. In traditional banking apps, that reassurance is built into the interface itself. A balance appears in a defined, labeled space, and over time, users learn to trust that structure.

Conversational AI changes that dynamic. When interactions become fluid and language-driven, the visual cues that signal reliability start to disappear. If the system is fluent enough, users can’t easily tell what’s a verified value and what’s a generated explanation. The experience feels natural, but that same naturalness makes it harder to know what to trust.

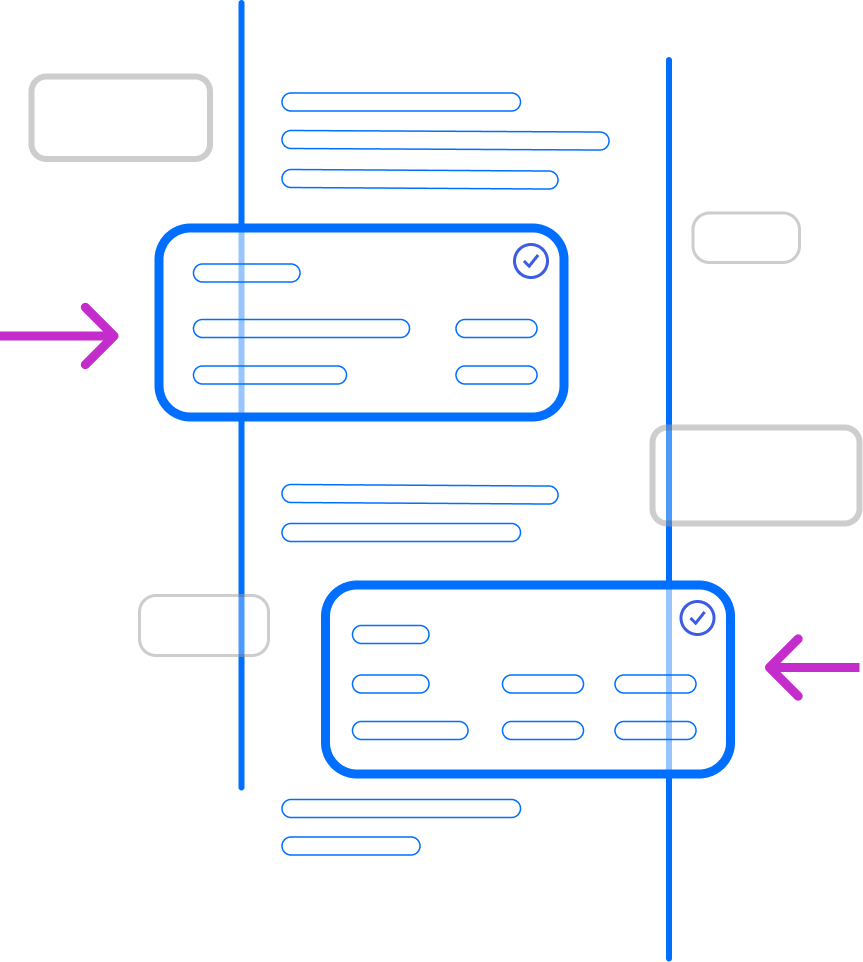

This is where orchestration shifts the design. Instead of blending everything into a single conversational stream, it makes the boundaries explicit. Verified data and AI guidance are presented differently, so users can immediately see what comes from the system of record and what comes from the model.

That distinction isn’t explained; it’s shown. And that’s what builds confidence. When users can see where information comes from, they don’t have to second-guess it. The interface does the work for them, turning trust from something users have to evaluate into something they can simply recognize.

The Two Layers of Trust

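The interface distinguishes between two clearly marked layers of information:

- Verified data: values that come directly from the bank’s system of record and appear in verified components.

- AI guidance: explanations and context generated by the model.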

This separation ensures that AI’s voice never competes with the system’s truth — they coexist, but clearly in different roles.
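One way to make the two layers concrete is to attach an explicit source tag to every piece of content, so the UI renders the label from data rather than from convention. The following is a minimal sketch under stated assumptions, not the implementation behind this series: the class names, field names, and the `badge` helper are hypothetical. Only the two label strings are taken from the example later in this article.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Union


class Source(Enum):
    SYSTEM = "system"  # value read from the system of record
    AI = "ai"          # text generated by the model


@dataclass(frozen=True)
class VerifiedValue:
    """A value supplied by a bank system (hypothetical shape)."""
    label: str
    value: str          # already formatted by the system of record
    system_name: str
    as_of: datetime
    source: Source = Source.SYSTEM


@dataclass(frozen=True)
class AIGuidance:
    """Model-generated context (hypothetical shape)."""
    text: str
    source: Source = Source.AI


def badge(item: Union[VerifiedValue, AIGuidance]) -> str:
    """Return the visual label the UI would attach to an item."""
    if item.source is Source.SYSTEM:
        return "System-Verified Data"
    return "AI Guidance"
```

Because both types are frozen, neither the rendering layer nor the model can change an item's source after it has been created.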

UX Principles for Designing Trust-Centered Interfaces

- Label the Source, Not Just the Output

  - Every piece of information carries a “source identity”: system, AI, or human. Visual consistency builds intuitive recognition over time.

- Confidence Through Contrast

  - Verified components are static and precise. AI guidance is dynamic and conversational. The contrast helps users subconsciously categorize reliability.

- Explainable Interactions

  - Tooltips or microcopy such as “Data shown from your account as of 10:32 AM” or “This insight was generated based on your past three months of spending” create lightweight explainability.

- Predictable Behaviors

  - Avoid sudden context changes or unsolicited actions. Predictability reinforces user control, which research shows is a core driver of trust (Benk et al., AI & Society, 2025).

- Honesty in Uncertainty

  - If the AI doesn’t have enough data, it should say so: “I don’t have access to this information right now.” This humility, paradoxically, can increase user trust.

- “No Black-Box Math” Principle

  - AI should never generate or present calculated financial outcomes directly. Instead, it should surface verified calculators or tools connected to the bank’s systems. This maintains transparency, ensures auditability, and strengthens user confidence in every numeric output.

  - Similarly, when AI configures verified calculators or planners (e.g., setting a 12-month horizon), it passes only display parameters, never financial data or computed values.
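The “No Black-Box Math” rule can be enforced at the boundary between the model and the verified component rather than left to convention. Here is a minimal sketch, assuming a hypothetical allow-list of display parameters. The parameter names and the `validate_ai_config` function are illustrative and do not come from any real banking API.

```python
# Hypothetical allow-list: display settings the AI may set on a verified component.
ALLOWED_DISPLAY_PARAMS = {"horizon_months", "chart_type"}


def validate_ai_config(params: dict) -> dict:
    """Return a copy of params if it contains only display settings.

    Raises ValueError if the AI tries to pass anything else, such as a
    balance, a rate, or a computed result.
    """
    rejected = sorted(set(params) - ALLOWED_DISPLAY_PARAMS)
    if rejected:
        raise ValueError(f"AI may not set these fields: {rejected}")
    return dict(params)


print(validate_ai_config({"horizon_months": 12}))  # {'horizon_months': 12}
```

A payload such as `{"balance": 7800}` would be rejected before it reaches the calculator, so every number on screen still originates from the bank’s systems.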

Turning Compliance into Confidence

Trust design isn’t just an aesthetic choice; it’s a compliance strategy.

When the interface clearly distinguishes between verified data and AI-generated guidance, it does more than improve usability. It creates a system that is easier to govern, easier to explain, and easier to trust. Every value can be traced back to a source, users can quickly understand what to rely on and what to interpret, and the bank demonstrates transparency as part of the experience, not as a disclaimer after the fact.

This approach aligns with the direction regulators are already moving in. Across jurisdictions, the expectation is consistent: systems should be transparent, traceable, and understandable to the people using them. Whether it’s the EU AI Act’s requirement to disclose AI-generated content, the FCA’s focus on clear and informed decision-making, or broader U.S. regulatory guidance around oversight and accountability, the message is the same.

Interface orchestration takes those abstract requirements and makes them visible. Instead of treating compliance as something behind the scenes, it becomes part of the user experience, built into how information is presented, understood, and trusted in real time.

A Simple Example

User: “Can I pay my loan early?”

System: Opens the Early Payment Calculator, a verified component directly connected to the loan management system.

It pre-populates:

- Outstanding Balance: €7,800

- Current APR: 6.8%

- Next Payment Due: Oct 25

AI Guidance: “I’ve opened your Early Payment Calculator — it shows how different payment amounts affect your total interest. You can adjust the slider to explore options.”

Labels:

- “System-Verified Data”

- “AI Guidance — Context only, no calculations performed”

The user sees both intelligence and integrity — one reinforces the other.
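The “Context only, no calculations performed” label is easier to stand behind if something checks it. One possible guard, sketched here under assumptions, scans the AI’s text for numbers and flags any that do not already appear in the verified values on screen. The function name and the regular expression are illustrative, and a production check would need to handle more number formats.

```python
import re
from typing import List

# Matches figures such as 7,800 or 6.8 or 25 (illustrative, not exhaustive).
NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")


def unverified_numbers(guidance: str, verified_values: List[str]) -> List[str]:
    """Return numbers in the guidance text that no verified value contains."""
    allowed = set()
    for value in verified_values:
        allowed.update(NUMBER.findall(value))
    return [n for n in NUMBER.findall(guidance) if n not in allowed]


verified = ["€7,800", "6.8%", "Oct 25"]
print(unverified_numbers("Your current APR is 6.8%.", verified))         # []
print(unverified_numbers("Paying €500 extra saves you €312.", verified))  # ['500', '312']
```

An empty list means the guidance only repeats figures the system of record supplied. Anything else suggests the model has produced a number of its own, and the message can be blocked or rewritten.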

The Outcome

The result of trust-calibrated design isn’t just a safer interface; it’s a calmer one.

Users no longer have to wonder “Can I trust this?” because the interface answers that question visually, continuously, and confidently.

In banking, where emotional security and financial accuracy overlap, this isn’t just UX excellence; it’s digital empathy built into the system itself.

The Experience – From Conversation to Confidence

Designing for trust doesn’t just happen in architecture diagrams — it happens in moments.

Moments when customers ask questions that carry emotion: “Can I pay this off early?”, “Am I saving enough?”, “Will I have enough left this month?”

The Interface Orchestration Model transforms those moments from uncertainty into clarity — not by giving more information, but by presenting it in the right way, at the right time, through the right interface.

A Day in the Life of an Orchestrated Experience

Scenario: Maria, a customer juggling multiple accounts and a credit card balance.

1. The Intent

Maria types: “Why am I paying so much interest?”

- The AI interprets the intent as “optimize debt repayment.”

- It recognizes the relevant data source: her credit card account.

- It routes the request to the Debt Optimizer component.
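The routing step above can be sketched as a lookup from a classified intent to a registered verified component. This is a simplified illustration: the intent keys, component identifiers, and the `route_intent` function are hypothetical, and how the model classifies the intent is outside the scope of the sketch.

```python
from typing import Optional

# Hypothetical registry: classified intent -> verified component identifier.
COMPONENT_REGISTRY = {
    "optimize_debt_repayment": "debt_optimizer",
    "early_loan_payment": "early_payment_calculator",
}


def route_intent(intent: str) -> Optional[str]:
    """Return the verified component for an intent, or None if none is registered."""
    return COMPONENT_REGISTRY.get(intent)


print(route_intent("optimize_debt_repayment"))  # debt_optimizer
print(route_intent("predict_stock_prices"))     # None
```

Returning `None` for an unregistered intent leaves room for the assistant to say it cannot help, rather than improvising an answer without a verified component behind it.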

2. The Interface Invocation

The Debt Optimizer opens instantly.

Data appears live from the bank’s core system:

- Balance: €2,350

- APR: 22.9%

- Suggested alternative: Credit line at 11.5% APR

The data is labeled: “Verified by Core System (as of 09:32 AM).”

3. AI Guidance (Contextual Framing)

The AI adds a brief contextual explanation:

“I’ve opened your Debt Optimizer. It’s showing your current credit-card rate (22.9%) and the available credit-line rate (11.5%). You can explore how different transfer amounts affect your monthly costs.”

4. Trust Reinforced by Transparency

Maria can see exactly which parts of the screen are system-verified and which are AI advisory.

- She doesn’t have to wonder if the numbers are real — the design answers that question upfront.

- The assistant offers: “Would you like to see how this affects your next payment?” If she accepts, it opens another verified component.

5. Outcome

Maria feels informed, not manipulated.

She can act confidently because she knows where each piece of information comes from.

The conversation feels natural — but it’s grounded in system integrity.

Why This Experience Feels Different

Traditional chatbots provide answers; orchestration provides understanding.

The difference is subtle but profound:

- Maria isn’t reading a paragraph of AI text; she’s interacting with a living dashboard that adapts to her context.

- The AI doesn’t try to be the bank; it helps her see the bank more clearly.

- Transparency isn’t a footnote — it’s a design feature.

This shifts the emotional tone of digital banking from cautious verification (“Can I trust this?”) to calm confidence (“I know this is correct.”).

UX Mechanics That Support Confidence

Continuity of Context

The conversation and UI evolve together, so users never feel transported to a “different” system. This continuity reduces cognitive friction, a key factor in reducing financial anxiety.

Predictable Transitions

When the AI opens a component, it does so visibly — showing the handoff between guidance and verified data. This predictability builds familiarity and long-term trust.

Empathetic Tone

The AI uses language that acknowledges uncertainty and provides reassurance without overpromising.

Visible Provenance

Every visible number includes its origin (“as of” timestamp, system name), in line with the transparency obligations of EU AI Act Article 50, which cover the disclosure of AI-generated or manipulated content.
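A provenance label of this kind is simple to generate consistently. The sketch below assumes a hypothetical `provenance_label` helper and reproduces the label format used in Maria’s scenario. The time is formatted by hand so the result does not depend on the server’s locale settings.

```python
from datetime import datetime


def provenance_label(system_name: str, as_of: datetime) -> str:
    """Build a label such as 'Verified by Core System (as of 09:32 AM)'."""
    hour12 = as_of.hour % 12 or 12            # 0 -> 12, 13 -> 1
    suffix = "AM" if as_of.hour < 12 else "PM"
    time_text = f"{hour12:02d}:{as_of.minute:02d} {suffix}"
    return f"Verified by {system_name} (as of {time_text})"


# The date is arbitrary; only the time appears in the label.
print(provenance_label("Core System", datetime(2025, 1, 1, 9, 32)))
# Verified by Core System (as of 09:32 AM)
```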

Beyond Convenience — Emotional Design for Trust

When designed well, orchestration doesn’t just prevent hallucination; it humanizes precision.

By visually separating verified truth from AI guidance, it reduces anxiety and gives users a sense of control, one of the strongest psychological levers for financial wellbeing.

In essence:

“The experience feels conversational, but the confidence feels institutional.”

That’s the sweet spot, where innovation and compliance meet empathy.

Why This Matters for Banks

For banks, this approach achieves three outcomes that traditional AI interfaces can’t:

- Measurable trust — fewer errors, fewer escalations, higher user confidence.

- Humanized compliance — transparency becomes a UX feature, not a legal disclaimer.

- Customer retention through calm — when users feel safe, they stay engaged.

Up next, the conclusion of the series covering next steps and scaling – how to build this safely, incrementally, and sustainably.

Read it here:

AI Interface Orchestration for Retail Banking - Part 4

Catch up on Part 1 and Part 2 below:

AI Interface Orchestration for Retail Banking - Part 1

AI Interface Orchestration for Retail Banking - Part 2

